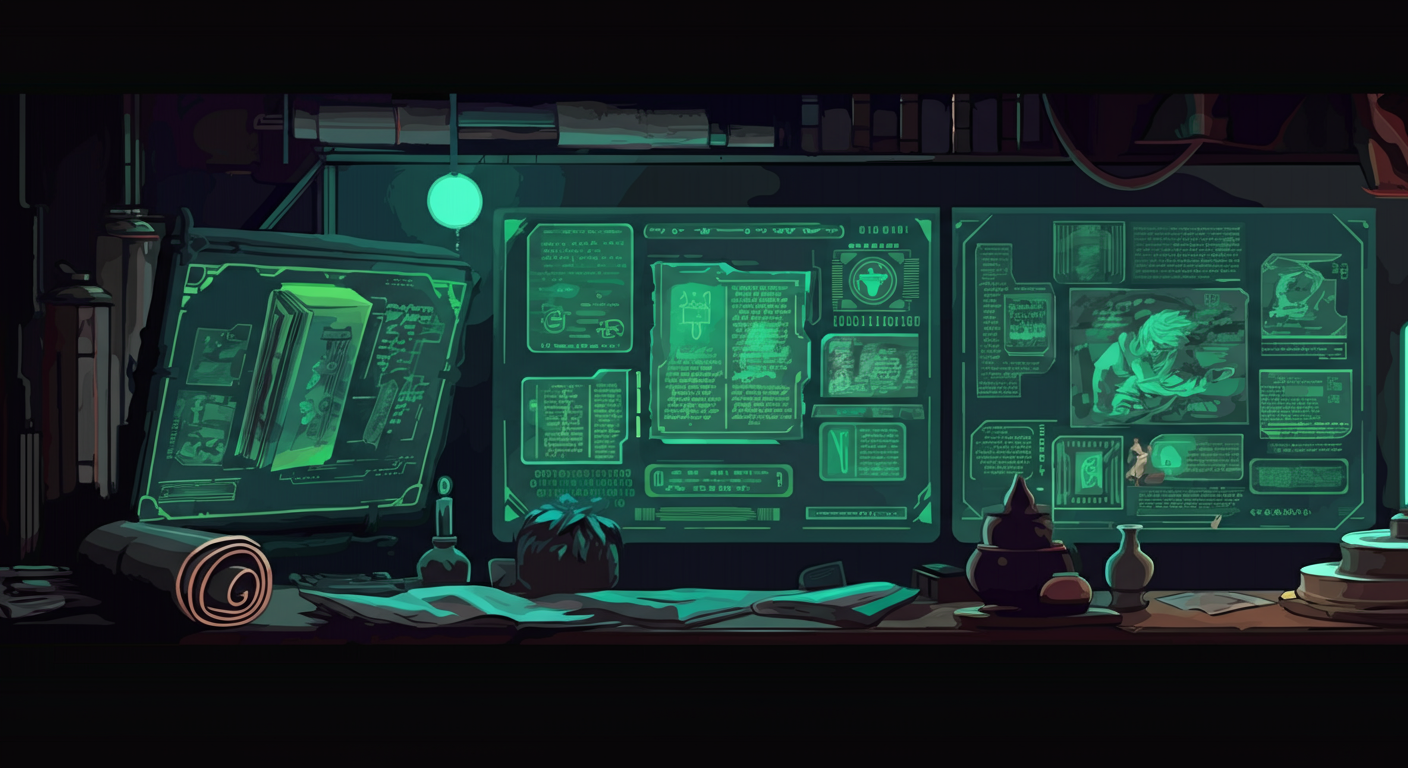

The Codex

Essential Guides

Docker for AI Agents

How to run AI agents in Docker containers with proper resource limits, GPU passthrough, and network isolation.

Coming soon.

Local Inference

Choosing the right inference engine (Ollama, vLLM, or llama.cpp) and working with GGUF quantization.

Fine-tuning

LoRA, QLoRA, and DPO: fine-tuning approaches for custom AI agent behavior.

Security & Sandboxing

How to safely test AI tools without risking your host system, using container isolation, network restrictions, and monitoring.

The Codex is a living document. Guides are updated as the AI landscape evolves. Always verify commands and configurations against your specific environment.